Delivering AI on Edge

Production-grade Edge AI solutions for enterprises that need real-time decisions, lower cloud costs, and secure on-premises processing.

* Vision analytics for manufacturing quality and safety on edge cameras.

* Smart retail shelves and in-store analytics without streaming video to the cloud.

* Edge LLM assistants embedded in vehicles and industrial equipment for hands-free operations.

From model idea to fleets of edge devices, Mobodexter manages the full lifecycle so your team can focus on the application.

Train & Optimize

We help you choose or build the right model, then optimize with techniques such as pruning, quantization, and hardware-aware tuning to meet your device constraints.

Result: Higher accuracy at lower latency and power on real edge hardware.
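As a rough illustration of what pruning and quantization do to a model's weights, here is a minimal sketch in plain Python. The function names and sample weights are hypothetical and are not part of EdgeSage or any Mobodexter tooling. Production workflows would normally rely on a framework's own optimization tools rather than hand-written code like this.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude weights (illustrative helper)."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float values."""
    return [v * scale for v in q]


# Hypothetical weights, for illustration only
weights = [0.82, -0.03, 0.41, -1.27, 0.005, 0.66, -0.12, 0.09]

pruned = prune_by_magnitude(weights, sparsity=0.25)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

# Worst-case rounding error introduced by quantization
max_error = max(abs(a - b) for a, b in zip(pruned, restored))
print(pruned, q, scale, max_error)
```

An 8-bit integer takes a quarter of the storage of a 32-bit float, which is where much of the memory and bandwidth saving comes from. Whether accuracy holds up after these steps has to be validated on the target device.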

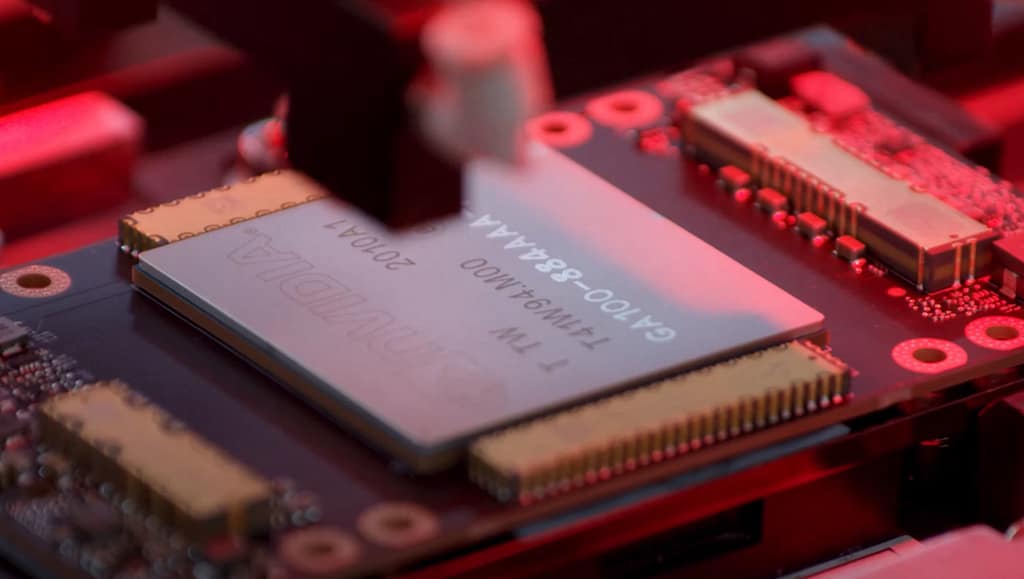

Mobodexter designs and validates edge AI stacks on NVIDIA, Intel, and AMD so you get the best performance for your workload, not just a generic reference design.

Choose Compute Architecture

- NVIDIA for vision-heavy AI

Ideal for GPU-accelerated computer vision and deep learning on devices like Jetson and data center GPUs.

We provide tuned containers, TensorRT-optimized models, and deployment playbooks for NVIDIA-based edge fleets.

- AMD for flexible GPU compute

Suitable for organizations standardizing on AMD GPUs and CPUs for cost-effective edge compute.

We help you validate performance and reliability on AMD-based edge servers for your specific AI stack.

- Intel for CPU-centric analytics

Best for mixed workloads that combine traditional analytics with AI using CPUs, iGPUs, and accelerators with OpenVINO.

We optimize inference pipelines to fully exploit Intel architecture while keeping power and cost in check.

Not sure which way to go? Share your workload and we’ll recommend the optimal hardware and software stack.

Choose Computing

Deploy EdgeSage on proven edge server platforms from Dell, HPE, and Lenovo for reliable, secure, and manageable edge AI at scale.

What’s included:

- Pre-integrated hardware + EdgeSage software stack for AI workloads.

- Secure connectivity and remote management for multi-site edge fleets.

- Implementation, performance tuning, and ongoing support services.

Deployment patterns:

- Single-site edge server for factory or warehouse analytics.

- Multi-site fleets across retail stores or branches, centrally managed.

- Hybrid setups where models are trained in the cloud and deployed at the edge.

Cut bandwidth and cloud costs while meeting strict latency and data residency requirements with on-premises edge AI.

Run Edge AI Models

Mobodexter delivers ready-to-run edge AI solutions built on CNNs, RNNs, lightweight architectures, anomaly detectors, and custom models.

- Visual inspection & safety (CNNs, lightweight models)

Defect detection, safety compliance, and occupancy monitoring with camera-based analytics on edge devices.

- Predictive maintenance (Anomaly detection models)

Detect unusual patterns in sensor data from machines and vehicles to prevent downtime and costly failures.

- On-device assistants (RNNs, LLMs)

Voice and text assistants embedded in vehicles, kiosks, and industrial consoles for hands-free interaction.

- Custom vertical solutions

We design custom edge AI models for your specific environment in manufacturing, retail, smart city, and more, then deploy them via EdgeSage.

Edge deployments have helped customers reduce data transfer to the cloud by up to 80% while improving responsiveness for critical decisions.

Start Your Edge AI Project

Whether you are modernizing an existing product or launching a new edge-native solution, Mobodexter can join at any stage of your journey.

SOLUTION DESIGN

Edge AI solution design and architecture for your devices and sites.

MODEL SELECTION

Model selection, optimization, and integration with your existing systems.

MODEL DEPLOYMENT

EdgeSage setup for deployment, orchestration, and monitoring across fleets.